The next big thing will start out feeling like Nostalgia

[this essay is best read while listening to this soundtrack]

A hot summer in 2001

In the last year, agents have taken over. I don't know if this year's TIME Person of the Year has been awarded yet, but we have a winner already. Everything is now agentic. Every startup pitch with some flavour of AI has to mention agents, or variations of the word, at least five times to attract investment.

So here I am, sitting in this highly-agentic-focused meeting and my mind starts to wander. All of this feels “not new” to me, but not in the sense that I have heard the exact same pitch a thousand times (I have, in fact). It’s just that I can't pinpoint the recurring motif. Those pitches click with something deeper - something that sparks nostalgia.

The other day I picked up Sebastian Mallaby’s The Infinity Machine and it all started to make sense. The first time I met agents I was almost too young to remember, and now I’m too old to forget. It was a hot evening of the Italian summer of the very early 2000s, and my 10-year-old mind was about to be forever changed by a game. The Sims.

But let’s go back to the 90s. We are actually in 1994 and this story starts with a theme park.

To understand why a theme park matters for AI, you need to appreciate innovation in gaming in that period. Life was simpler and two gaming cultures - diametrically opposed - were emerging.

The first one was the “joystick-based”, action-driven culture. The user acts and the world reacts, you survive or die. Pac-Man, Street Fighter, Super Mario.

The second cultural stream was about strategy. Players were not the protagonists of those games, but designers of the systems and conditions in which the protagonists lived. Peter Molyneux, co-founder of Bullfrog Productions and first employer of Demis Hassabis, called them god-games. His first major success, Populous (1989), cast players as literal deities.

The god-game family branched in many directions - from the empires of Civilization and Age of Empires to the railways of Railroad Tycoon and the cities of SimCity - but one branch really matters for this story: behavioural simulations, where the point was not to win or optimise but to watch artificial people live.

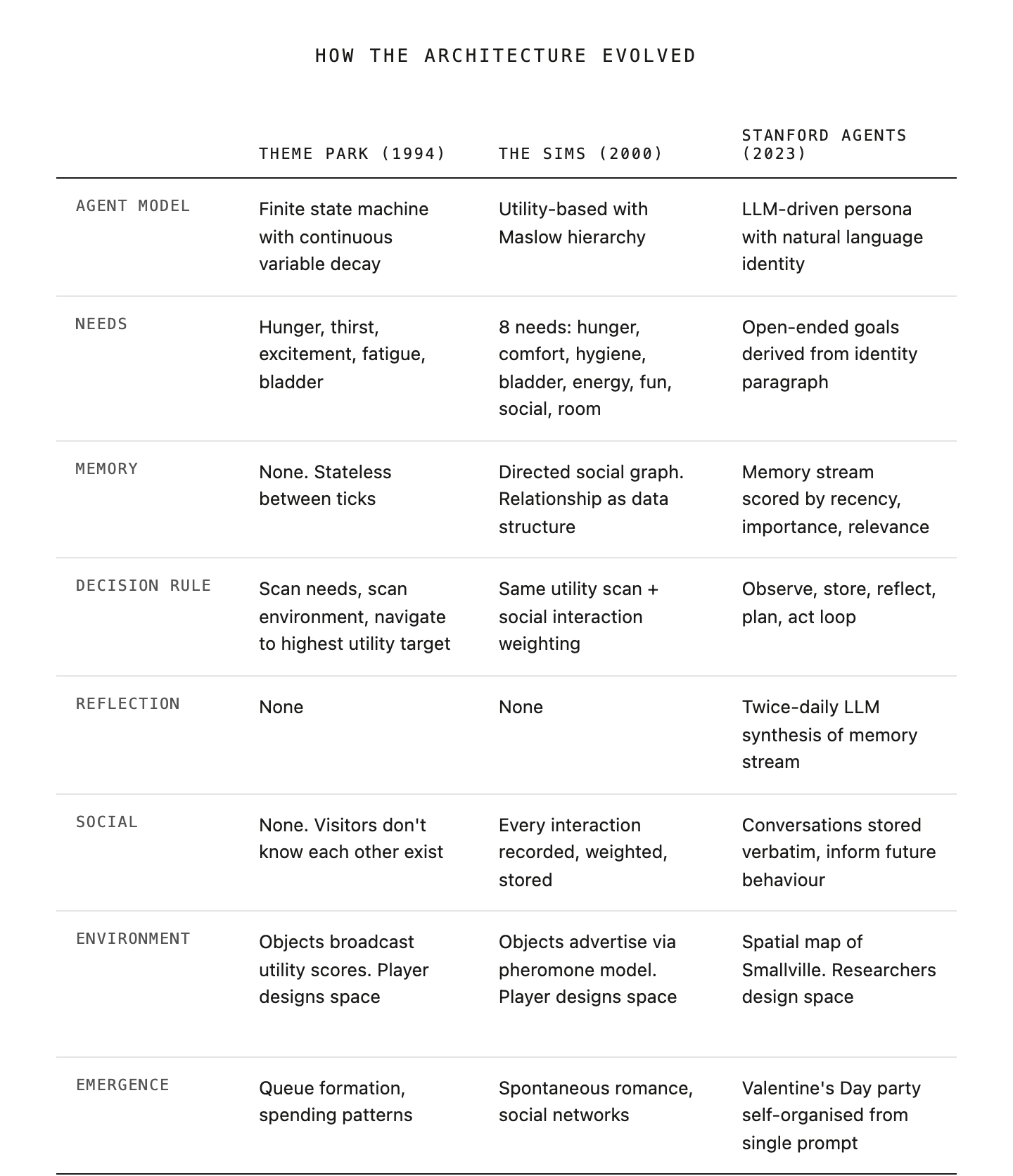

I argue that these games are the blueprint of AI. The agent architecture we now hear about so often - needs, memory, decisions, autonomy - was designed in game studios 30 years ago. Millions of us grew up inside that architecture, and the nostalgia we feel today is simply our familiarity with it.

The strange sense that we have been here before is not an illusion; it is recognition. And it all started with a theme park.

AI's first sandbox - a drink kiosk & a queue

In 1993, a 17-year-old Demis Hassabis began working with Peter Molyneux on the development of Theme Park, which in retrospect was the first serious attempt to model human behaviour at scale. In Hassabis's own words from 2022:

You can call them sandboxes even. […] [And] thousands of little people, AI people, came into your theme park and played on the rides, and depending on how well you designed the theme park, they were happy or less happy. […] It was a really interesting and formative experience for me, and not only professionally, but also demonstrating to me the power of AI.

Intentionally or not, they chose the perfect sandbox in a theme park. The fences make it bounded, so agents act in a specific environment. The agents’ motivations are simple enough to model and observe, which also makes it legible. There is clear consequentiality, so agents' decisions produce measurable outcomes. And last but not least, it is repeatable indefinitely, which makes it more rigorous, as it can be run under different conditions.

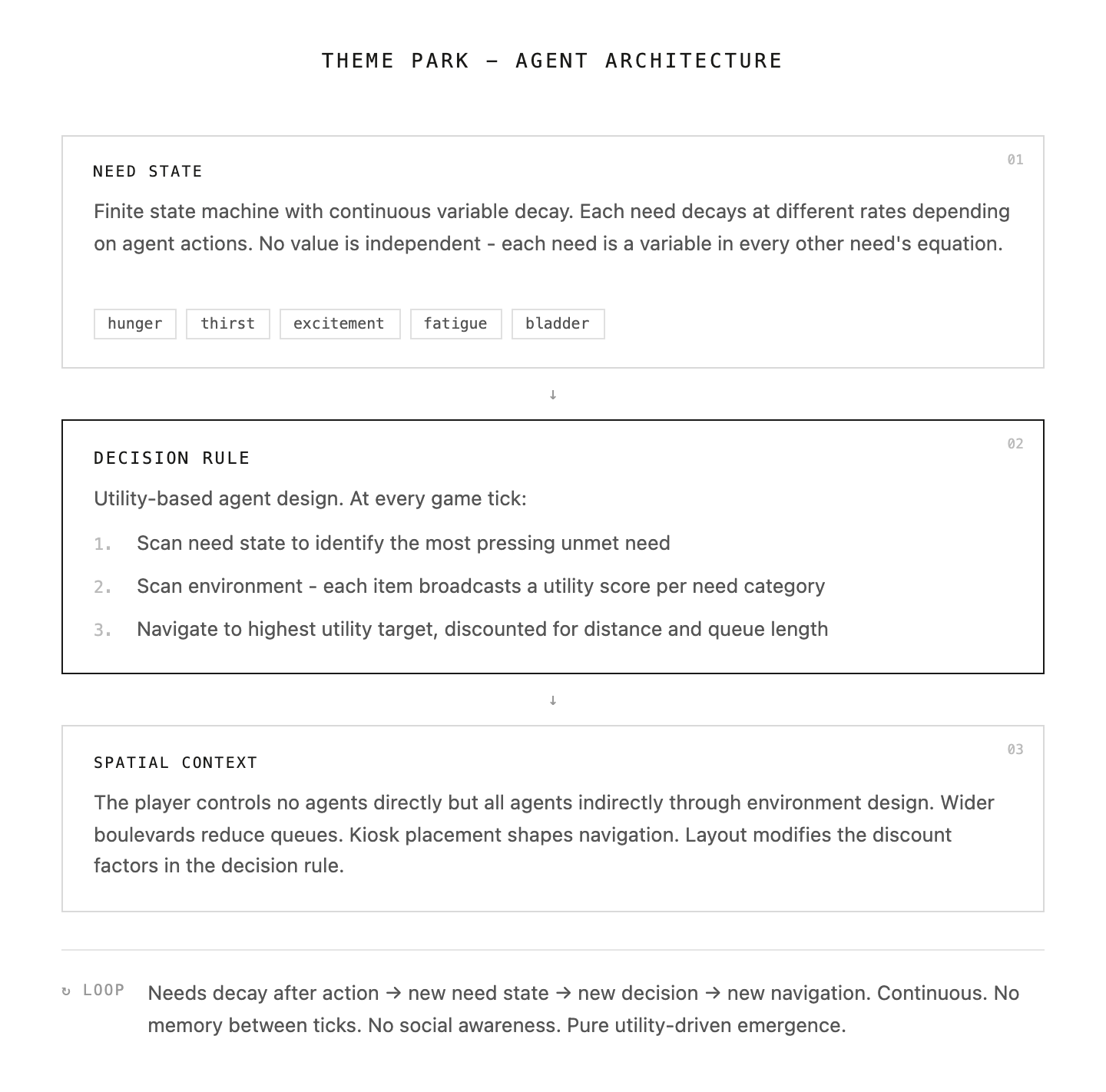

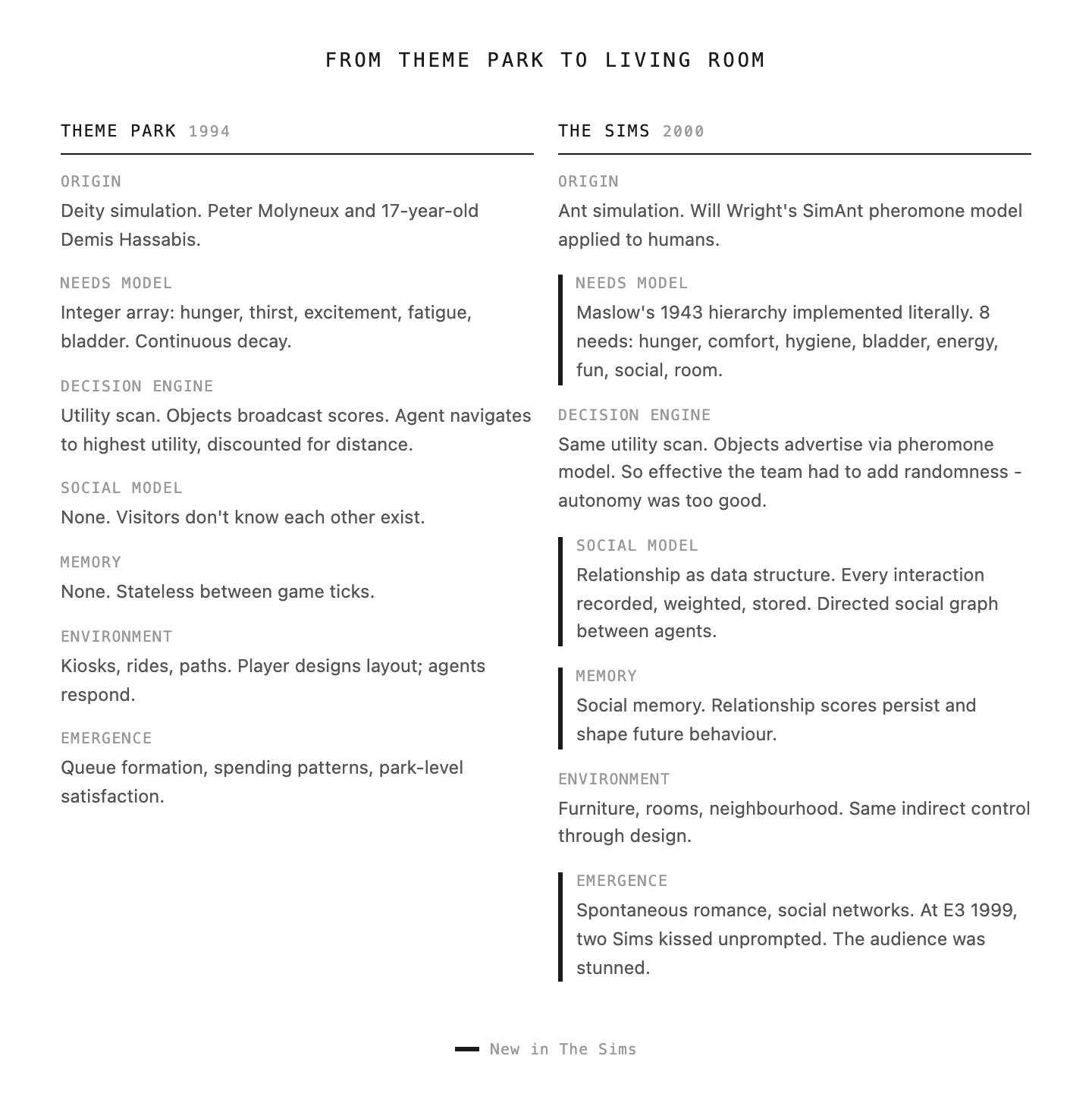

The architecture of this game is beautiful. The first component was the Need State, i.e. a finite state machine with continuous variable decay. Each visitor lived inside 200 bytes of memory. In those 200 bytes, each visitor carried an array of integer values across needs (hunger, thirst, excitement, fatigue, bladder). These values decayed continuously at different rates depending on agents' actions. Hunger decayed faster after a ride, thirst decayed faster after salty food. No single value was independent, so each need state was a variable in every other need’s equation.
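To make the Need State concrete, here is a minimal sketch of how such a structure could look. It is not the game's actual code: the class name `Visitor`, the decay rates and the multipliers are all hypothetical values I picked for illustration, and the sketch only models the effect of actions on decay, not every interaction between needs.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical per-tick decay rates; the game's real values are not given here.
BASE_DECAY = {"hunger": 2, "thirst": 3, "excitement": 4, "fatigue": 1, "bladder": 2}

# Hypothetical cross-effects: the last action multiplies the decay of some needs.
ACTION_MULTIPLIERS = {
    "ride": {"hunger": 2},        # rides make visitors hungry faster
    "salty_food": {"thirst": 2},  # salty food makes visitors thirsty faster
}


@dataclass
class Visitor:
    # Each need is an integer satisfaction level from 0 (urgent) to 100 (satisfied).
    needs: dict = field(default_factory=lambda: {name: 100 for name in BASE_DECAY})
    last_action: Optional[str] = None

    def tick(self) -> None:
        """Advance one game tick: every need decays, some faster than others."""
        multipliers = ACTION_MULTIPLIERS.get(self.last_action, {})
        for name, rate in BASE_DECAY.items():
            drop = rate * multipliers.get(name, 1)
            self.needs[name] = max(0, self.needs[name] - drop)


visitor = Visitor()
visitor.tick()                # an idle tick
visitor.last_action = "ride"
visitor.tick()                # hunger now drops twice as fast
print(visitor.needs)
```

Keeping every need as a small integer is what makes it plausible to fit thousands of visitors in memory, which is the constraint the 200-byte budget points to.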

The second component was the decision rule, and this is where the architecture shows clear seeds of AI. At every game tick, each agent would execute a series of actions. First, scan the need state to identify the most pressing unmet need. Second, scan the environment to identify the items that could satisfy that need; each item would broadcast a utility score for each need category. Third, navigate towards the highest-utility target, discounted for distance and queue length. The technical name for this pattern is utility-based agent design, and it would be replicated in The Sims and in modern agentic AI frameworks, where tools advertise capabilities to orchestrators.
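The decision rule described above can be sketched in a few lines. This is my own illustration under stated assumptions, not the original implementation: the names `Attraction`, `discounted_utility` and `choose_target`, the discount formula and the cost weights are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Attraction:
    name: str
    adverts: dict     # need name -> advertised utility score
    distance: float   # path length from the visitor, in tiles
    queue: int        # visitors already waiting


def most_pressing_need(needs: dict) -> str:
    """The need with the lowest satisfaction level is the most pressing."""
    return min(needs, key=needs.get)


def discounted_utility(item: Attraction, need: str,
                       distance_cost: float = 0.1, queue_cost: float = 0.2) -> float:
    """Advertised utility, discounted for distance and queue (assumed formula)."""
    raw = item.adverts.get(need, 0.0)
    return raw / (1.0 + distance_cost * item.distance + queue_cost * item.queue)


def choose_target(needs: dict, attractions: list):
    """One tick of the rule: find the need, scan the adverts, pick the best target."""
    need = most_pressing_need(needs)
    candidates = [a for a in attractions if a.adverts.get(need, 0.0) > 0]
    if not candidates:
        return None
    return max(candidates, key=lambda a: discounted_utility(a, need))


needs = {"hunger": 60, "thirst": 10, "excitement": 80}
park = [
    Attraction("drink kiosk", {"thirst": 50}, distance=5, queue=10),
    Attraction("lemonade cart", {"thirst": 30}, distance=2, queue=0),
    Attraction("burger stand", {"hunger": 70}, distance=1, queue=0),
]
print(choose_target(needs, park).name)
```

In this toy park the kiosk advertises the higher score, but its queue discounts it below the lemonade cart. Empty the kiosk's queue and the same visitor walks to the kiosk instead, which is how the player steers agents without ever touching them.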

The last component was the spatial context, which basically means that the player - who controls none of the agents directly - controls all of them indirectly through environment design. A wider boulevard means fewer queues, which reduces the discounts applied in the decision rule.

To me, there was little intentionality in the AI design process, but an incredibly simple human-based design of needs, decisions and spatial awareness that, for the first time, allowed them to model and experiment with the framework now used to design agents in 2026. A game, mainly built for fun and under strong engineering constraints, turned out to be a perfect sandbox for the development of AI. But a harder question was brewing: what happens when agents start to remember and fall in love?

Nothing will ever be the same

Time to go back to that hot Italian summer of the early 2000s. My cousin’s PC is making the noise of an A380 under the summer heat and we can only play after dinner. My uncle, a mathematics professor with a passion for puzzle games and simulation - my first memory of a game is Myst III: Exile - has just brought home this new game where you can build houses and put people in them. We spend the first three hours arranging furniture, choosing the best wallpaper, arguing over the position of the sofa and the TV. The music of the game is so pleasant I still remember it some 25 years later, and I hope you are listening to it too. After putting together this beautiful living space, we press play and we open the doors.

Everything changes.

I watch my Sim walk around the house we built, sitting on the sofa we placed. He reads the newspaper and gets a job. We send him to meet the neighbours who so kindly come to say hi. He gets promoted, develops new skills, he even paints pictures. We are not playing him but watching him. We are orchestrators of conditions. And I am here cheering for an entirely artificial person, hoping he falls in love with his neighbours.

What I definitely did not know then was that I had just entered the most important sandbox in the history of AI. I had just become one of fifty million people who spent formative years in intimate contact with artificial agents. What we called playing a game was much more consequential than that.

To understand how we came to this, we need to quickly talk about ants. Yes, ants.

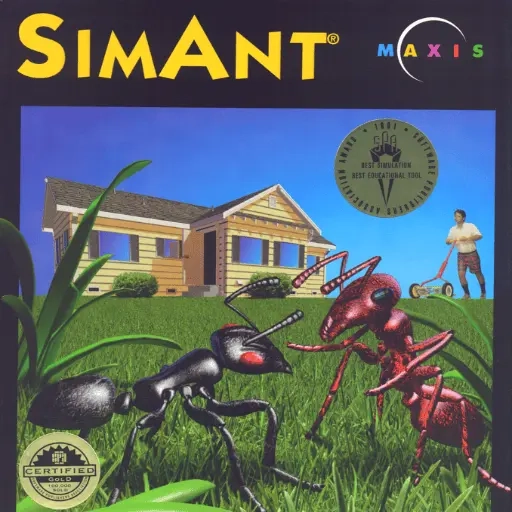

While Theme Park started from a deity, The Sims started from ants. Will Wright, magnificent systems thinker and designer of SimCity, was drawn to them in his ideation process.

[They] are one of the few real examples of intelligence we have that we can study and deconstruct. We're still struggling with the way the human brain works. But if you look at ant colonies, they sometimes exhibit a remarkable degree of intelligence […] We've been able to study social insects and really understand how that intelligence emerges from a collection of very simple little parts, to a degree that's far ahead of the understanding of the brain and its processes. That's, at the lowest level, what's always fascinated me about ants.

This research culminated in SimAnt, the precursor to The Sims. In the game, a human character was living in a house that the ants were trying to invade. But here is the interesting bit: while the human was programmed the traditional way, i.e. through scripted behaviours and rules, the ants were programmed the same way as the theme park attendees, with emergence as a key design principle.

It was exactly this point that led to the evolution of SimAnt into The Sims.

I realised at the end of SimAnt that the simulation we built for the ants was almost more intelligent than for the guy, because the guy was being done using traditional programming, whereas the ants were done using this distributed environmental intelligence of the pheromone trails. (Will Wright)

There was also an incredible dose of serendipity. Wright originally set out to build an architecture evaluator - a tool for designing houses, with small virtual people whose job was to score the quality of your decisions. “The people were just there to score the architecture, and then everybody had so much fun just messing with the people that we doubled down on that.”

The rest is history.

Humans replaced ants. The pheromone trails in the environment that the ants were following became object advertisements in the environment (the kiosk of Theme Park versus the thirst need). Ants following the strongest pheromone became the Sim following the highest utility score.

The architecture worked so well it created a problem. The team had to deliberately introduce randomness into the decision rule, because anything players did was worse than letting the Sims run themselves.
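One common way to add that kind of randomness to a utility-based rule is to sample among the best few options instead of always taking the top one. The sketch below shows that general technique; I am not claiming it is what the team shipped, and the function name `pick_action`, the `top_k` parameter and the scores are hypothetical.

```python
import random


def pick_action(scored_actions: dict, top_k: int = 3, rng=None) -> str:
    """Sample one of the top_k actions, weighted by score (scores assumed positive)."""
    rng = rng or random
    ranked = sorted(scored_actions.items(), key=lambda kv: kv[1], reverse=True)[:top_k]
    names = [name for name, _ in ranked]
    weights = [score for _, score in ranked]
    return rng.choices(names, weights=weights, k=1)[0]


scores = {"eat": 9.0, "watch tv": 6.0, "chat": 5.0, "nap": 1.0}
rng = random.Random(0)  # seeded only to make the demo repeatable
print([pick_action(scores, rng=rng) for _ in range(5)])
```

The agent still favours its best option, but it no longer plays perfectly, which leaves room for the player to do better than the autopilot.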

The Sims built on the same utility-based architecture as Theme Park, but grounded it in formal psychology - Wright had read Maslow's 1943 paper and implemented the hierarchy literally. The real innovation, however, was the social interaction system. Theme Park visitors did not know each other existed. For the first time, relationship was a data structure. Every interaction between two Sims was recorded, weighted, and stored - a directed social graph between agents that made behaviour coherent over time and created psychology-based agents with genuine social memory.
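A relationship stored as a data structure could look something like the sketch below. This is a hypothetical illustration, not Maxis's code: the class `SocialGraph`, the score range of -100 to 100 and the per-interaction deltas are all assumptions of mine.

```python
from collections import defaultdict


def clamp(value: int, low: int = -100, high: int = 100) -> int:
    return max(low, min(high, value))


class SocialGraph:
    """Directed graph: how A feels about B is stored separately from how B feels about A."""

    def __init__(self) -> None:
        self.scores = defaultdict(int)  # (source, target) -> relationship score
        self.history = []               # every interaction, in order

    def record(self, actor: str, target: str, interaction: str,
               actor_delta: int, target_delta: int) -> None:
        """Store the interaction and update both directed edges by their own weight."""
        self.history.append((actor, target, interaction))
        self.scores[(actor, target)] = clamp(self.scores[(actor, target)] + actor_delta)
        self.scores[(target, actor)] = clamp(self.scores[(target, actor)] + target_delta)

    def feeling(self, source: str, target: str) -> int:
        return self.scores[(source, target)]


graph = SocialGraph()
graph.record("Bob", "Betty", "tell joke", actor_delta=5, target_delta=-2)
graph.record("Bob", "Betty", "compliment", actor_delta=3, target_delta=8)
print(graph.feeling("Bob", "Betty"), graph.feeling("Betty", "Bob"))
```

Because the edges are directed, a joke can land well for one Sim and badly for the other, and the accumulated history is what makes later behaviour look coherent.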

We don’t appreciate enough how transformational this game was for the industry. Released in February 2000, it went on to become the best-selling PC game in history, overtaking Myst (the same Myst my uncle was a fan of). On top of this, it was the first game to break the gamer demographic, with over fifty percent of players being women. The reason was that the thesis behind The Sims was about relationships, time, design, and the observation of artificial lives. This is strikingly similar to what we are seeing today with the adoption of AI, and the reason is that it was AI all along; we simply couldn’t see it back then.

There’s a telling episode that explains this concept of emergence in the game. At E3 in 1999, EA (which had acquired Maxis) had put The Sims in a corner of their booth. The team knew the conference was make or break - if the game didn’t generate interest, it would be cancelled.

They set up a wedding scene for the press, but literally ran out of time to fully script the guests’ behaviour. The ceremony was live, and the Sims could do whatever the AI decided. During one of the showings, two female guests in the wedding audience turned to each other and kissed. Nobody had scripted it or intended it. The behaviour emerged from the social interaction code that the programmer had built.

The kiss became the talk of the conference. EA moved the game front and centre at their booth for the rest of the show. The real significance of that moment is not cultural though - it was the first time the mass market would see an emergent behaviour. It was the product of simple interaction rules running unsupervised inside a bounded environment. If that sounds a lot like agentic AI, that's because it is.

By 2005, over 52 million people had spent meaningful time inside The Sims. They created relationships with their agents, feeling genuine pleasure when they succeeded and seeing them live lives they could not live. These agents now had memory, interactions, actions, but still lacked reasoning. It would take until 2023 to give them a voice.

20 years later, The Sims go to University

In April 2023, a Stanford PhD student named Joon Sung Park and his co-authors uploaded a paper called Generative Agents: Interactive Simulacra of Human Behavior. Early in the paper, they describe the experimental environment as a sandbox “reminiscent of The Sims”.

The paper introduced twenty-five agents living in a small town called Smallville, with its own cafe, park, college and houses. Each agent was given a single paragraph of identity: a name, age, job, a few relationships and habits.

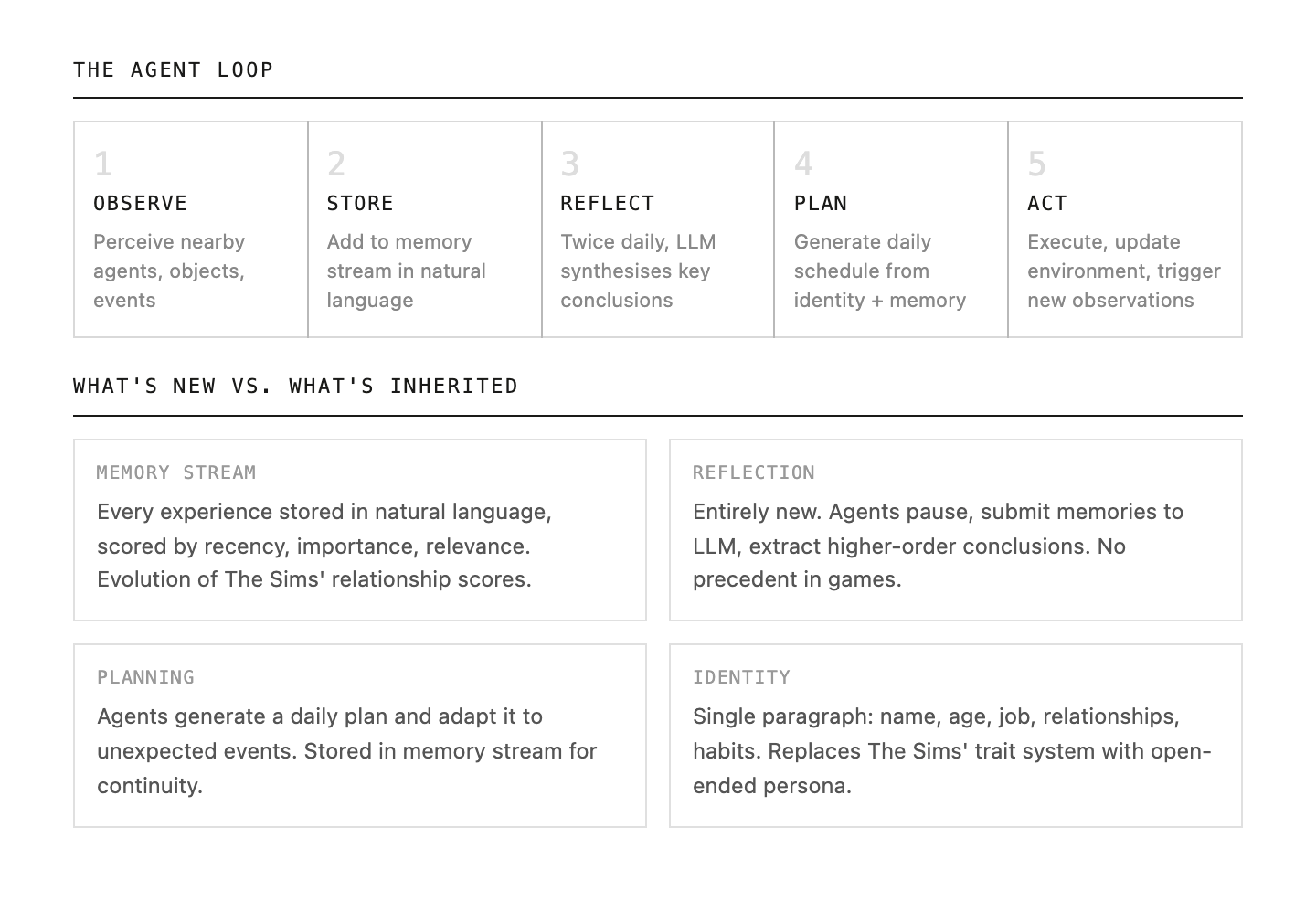

The architecture they built was an evolution of The Sims. The first component was the memory stream: instead of a simple relationship score, each agent kept a record of its experiences in natural language and retrieved memories by scoring them on recency, importance, and relevance. The second was reflection. Theme Park's agents had needs. The Sims' agents had desires and social memory. Here, the agents would pause a few times a day and submit their memories to an LLM to draw higher-level conclusions from everything they had gathered. Last but not least came planning. Using the first two components, agents generated a plan for the day and stored it in their memory stream, so it could be adapted in light of unexpected circumstances.
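The memory scoring can be sketched as follows. This follows the paper's description as I understand it: recency as an exponential decay since the memory was last accessed, importance as a rating assigned by the LLM, relevance as the cosine similarity between embeddings, each rescaled to the range 0 to 1 and summed with equal weights. The decay factor of 0.995 per game hour, the equal weights, the toy two-dimensional embeddings and the function name `retrieve` should all be read as assumptions of this sketch.

```python
import math


def cosine(u, v) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def min_max(values):
    """Rescale a list to [0, 1]; a constant list maps to all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def retrieve(memories, query_embedding, now_hours, top_n=2, decay=0.995):
    """Rank memories by normalised recency + importance + relevance."""
    if not memories:
        return []
    recency = [decay ** (now_hours - m["last_access"]) for m in memories]
    importance = [m["importance"] for m in memories]
    relevance = [cosine(m["embedding"], query_embedding) for m in memories]
    totals = [sum(parts) for parts in
              zip(min_max(recency), min_max(importance), min_max(relevance))]
    ranked = sorted(zip(totals, memories), key=lambda pair: pair[0], reverse=True)
    return [memory for _, memory in ranked[:top_n]]


stream = [
    {"text": "Isabella is planning a party", "last_access": 95, "importance": 8, "embedding": [1.0, 0.0]},
    {"text": "ate breakfast", "last_access": 99, "importance": 1, "embedding": [0.0, 1.0]},
    {"text": "watered the plants", "last_access": 10, "importance": 2, "embedding": [0.6, 0.8]},
]
for memory in retrieve(stream, query_embedding=[1.0, 0.0], now_hours=100):
    print(memory["text"])
```

In a real system the embeddings and the importance ratings would come from a language model; here they are hard-coded so the example runs on its own.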

The researchers were now orchestrating 25 agents running on continuous loops of observing, storing, reflecting, planning, and acting. A single intention was given to one of the agents: Isabella wants to throw a Valentine’s Day party.

Isabella started to tell friends about the party. Friends told others, acquaintances were made while spreading the invitation. On the day of the event, the guests arrived at the right place at the right time. Park described this result carefully: “We didn't design anything at a societal level. That's entirely up to the agent. If you create believable individual agents, collectively they should also behave like humans.” It is strikingly similar to what we had heard twenty years earlier from Wright and almost thirty years earlier from Molyneux.

The researchers also tested variations. One was an ablation study, i.e. removing each of the components discussed above in turn. The other was a human baseline - a crowdworker who was given full access to a specific agent’s memory stream and asked to roleplay as that agent. The full architecture outperformed every ablated version, as expected. Quite unexpectedly, it also outperformed the human. A person who had read everything the agent had experienced, every conversation and interaction, performed worse at being that agent than the agent itself.

The paper gathered thousands of citations and became one of the most influential AI papers of the decade, essentially serving as the first spillover of the work pioneered by Hassabis and Molyneux into proper AI research. Its architectural patterns - personas, memory, and the observe-plan-act loop - have since become the standard template for agent simulation. Any serious agentic framework built after 2023 contains some variation of this loop.

Looking forward at future nostalgias

Back to the present. Here I am, back in this meeting listening to pitches about agents, orchestrators and why-nows. The nostalgia is now much clearer in my mind: it is recognition. The recognition that I lived this paradigm before it was called AI. Fifty-two million of us did. We did not see ourselves as beta testers or early adopters of AI; we were just playing a game. In fact, that is exactly what we were. Nobody knew it.

The pattern is hard to ignore once seen. Chris Dixon of a16z once said the most consequential technologies are generally dismissed as toys, but my view is slightly different. The most consequential technologies don’t get dismissed at all. People enjoy them, fully and without reservation, with no idea what they are actually interacting with. The technology is inside the experience but invisible as technology. And nostalgia is the proof that we have lived through an embryonic phase of something that materialises today. It is recognition.

What are the sandboxes of today? What will drive nostalgia 20 years from now in the emergence of new technologies?

I can see some candidates. In gaming, Minecraft is being played by hundreds of millions of teenagers who are building and collaborating inside programmable worlds, training spatial and systems intuition they don't know they have.

In biology, over a billion people are passively feeding health data to wearable devices, seeding the preventative healthcare systems of the future without a single conscious decision.

In finance, Roblox's 150 million users trade digital goods daily, run virtual shops, and develop intuition for dynamic pricing and digital scarcity, thinking they are playing a game. It’s the same: the technology is inside the experience but invisible as technology.

I don’t know if any of these predictions will hold or if the current sandboxes are others. I would love to know what will drive nostalgia for this period 20 years from now. Maybe it's something so familiar I can't even see it while writing this list. And maybe that is exactly the point. Back in that room in the 2000s, during a hot Italian summer, I was just having fun. Little did I know I was meeting the biggest innovation of this century for the first time.

Sources

- Sebastian Mallaby, The Infinity Machine: Demis Hassabis, DeepMind, and the Quest for Superintelligence (Penguin Press, 2026)

- Demis Hassabis, interview on The TED Interview podcast with Chris Anderson, July 28, 2022

- Will Wright, interview with Keith Phipps, The A.V. Club, 2005

- Will Wright, interview with John Romero at IGDA Leadership Forum, November 2010, via Engadget

- Will Wright, The Replay Interviews, Game Developer, November 2023

- Will Wright on The Sims' AI autonomy, PC Gamer, February 2025

- Abraham Maslow, "A Theory of Human Motivation", Psychological Review, 50(4), 1943

- Joon Sung Park, Joseph C. O'Brien, Carrie J. Cai, Meredith Ringel Morris, Percy Liang, Michael S. Bernstein, "Generative Agents: Interactive Simulacra of Human Behavior", Stanford University, April 2023. arXiv:2304.03442

- Simon Parkin, "The Kiss That Saved The Sims", The New Yorker, July 2014

- Chris Dixon, "The next big thing will start out looking like a toy", cdixon.org, January 2010